Thread 'PCI express risers to use multiple GPUs on one motherboard - not detecting card?'




| Author | Message |

|---|---|

|

Joined: 8 Nov 19 Posts: 718

|

That sounds like the sort of device I helped Eric install in Muarae2 in the summer - 4 M.2 SSDs on a single PCIe card, lying flat in a 1U server case. Very fiddly. I doubt that would work on the end of a riser cable. Mind you, I think Eric is still having difficulty getting it to work in the datacenter. Since there aren't too many PCIE 4.0 devices out... But for all AMD GPUs available now, RX 5500, 5600 and 5700 series. Intel added support to its Optane SSDs. Asrock and Asus have launched their own versions of M.2 cards in which you can slot up to 4 M.2 SSDs. There's enough PCIe 4.0 around, if only you care to look. Yes, I think that many 'PCIE 4.0' devices out there nowadays are operating at 3.0 speeds. Some (SSDs) might actually benefit from PCIE 4.0 speeds, but only because they're connected to a 1x slot (unless you're talking about performance hardware, which goes for several hundred dollars apiece and is not beneficial for Boinc). If you use Linux, PCIE 4.0 only makes sense if you want to plug a GPU that's equal to an RTX 2080 into a PCIE 4.0 1x slot. Nvidia GPUs use PCIE 3.0, so they won't benefit from it; and even the fastest AMD GPUs aren't fast enough to be bottlenecked by PCIE 3.0 speeds; they should see less than a percent or two performance increase on PCIE 4.0 over 3.0 (if they truly support it). Considering that you can get the full speed of a 2080Ti running Boinc or Folding out of a PCIE 3.0 4x slot (possibly even a 2x slot), PCIE 4.0 makes no difference on AMD GPUs. Not even in Windows. |

Jord Joined: 29 Aug 05 Posts: 15648

|

"Nvidia GPUs use PCIE 3.0, so they won't benefit from it; and even the fastest AMD GPUs aren't fast enough to be bottlenecked by PCIE 3.0 speeds; they should see less than a percent or two performance increase on PCIE 4.0 over 3.0 (if they truly support it)." Do you have a link to an article backing up your claim? Or is this something you think, without substantial proof either way? "Considering that you can get the full speed of a 2080Ti running Boinc or Folding out of a PCIE 3.0 4x slot (possibly even a 2x slot), PCIE 4.0 makes no difference on AMD GPUs." Again, a link to an article or review? By the way, PCI Express link width is written xN, not Nx. The small x stands for the number of lanes: x1, x4, x8, x16, x32. As for articles, I did find https://www.techpowerup.com/review/nvidia-geforce-rtx-2080-ti-pci-express-scaling, which tested an RTX 2080 Ti with a lot of games, against all available PCIe slots, seeing how these beasts are made for gaming in the first place, not GPGPU calculations. And then gaming at very high resolutions. 8K TVs and monitors are already available. Insanely expensive maybe, but available. So while none of the GPUs may overwhelm a 360Hz monitor running at 1920x1080, that's going to be different running at 7680 × 4320 pixels. Also remember that these 'standards' are brought out with the thought that 'in the future' a manufacturer will bring something to market that uses part of or the full bandwidth of the PCIe slots. Plus Nvidia uses it in their inter-card communication bridge https://en.wikipedia.org/wiki/NVLink, where two RTX or Quadro cards will run in tandem at speeds of up to 25GB/s, which makes PCIe 4.0 necessary (max x16 speed 31.51GB/s). |
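For anyone who wants to check the 31.51GB/s figure above, here is a minimal sketch of the arithmetic. It assumes the published per-lane transfer rates and line encodings for each PCIe generation and ignores packet and protocol overhead, so real-world throughput will be lower. The function and table names are made up for this example.

```python
# Per-lane raw transfer rate (GT/s) and line-encoding efficiency per generation.
# 1.0 and 2.0 use 8b/10b encoding; 3.0 and 4.0 use 128b/130b.
PCIE_GENERATIONS = {
    "1.0": (2.5, 8 / 10),
    "2.0": (5.0, 8 / 10),
    "3.0": (8.0, 128 / 130),
    "4.0": (16.0, 128 / 130),
}


def pcie_bandwidth_gb_s(generation: str, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    return rate_gt_s * efficiency * lanes / 8  # 8 bits per byte


if __name__ == "__main__":
    for gen in PCIE_GENERATIONS:
        row = ", ".join(
            f"x{n}: {pcie_bandwidth_gb_s(gen, n):6.2f}" for n in (1, 4, 8, 16)
        )
        print(f"PCIe {gen}  {row}")
```

By this calculation a PCIe 3.0 x4 link has roughly the bandwidth of a PCIe 1.0 x16 link, which is why the lane count alone says little without the generation.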

|

Joined: 24 Dec 19 Posts: 241

|

it depends on the application of course. SETI may not use enough bandwidth to bottleneck even an RTX 2080 Ti on a PCIe 3.0 x1 link, but another application like Einstein or GPUGrid might need a lot more. So you really have to look at what you are trying to run first. but he's right at least that nvidia does not (at the moment) have any PCIe 4.0 capable GPUs. even on a PCIe 4.0 capable board, the lanes will run in 3.0 mode. |

Joseph Stateson Joined: 27 Jun 08 Posts: 642

|

I had mixed results with risers on old motherboards and especially those 4-in-1 risers. An older X8DTL (1366 socket) required a board in the x16 slot to install Ubuntu 18.04. After installing Ubuntu I was able to replace it with a riser. A 4-in-1 riser only showed one board when more than 1 ATI was used, so I never got more than 4 boards to work. My TB-85 (8 slot) worked fine with 8 risers, all gtx1060, Ubuntu 18.04. For a "seti wow event" I temporarily added first a gtx1070 and then a 1070Ti. Things quickly went south, probably because of the different mix of boards. I would see the following about twice a week: "Unable to determine the device handle for GPU 0000:01:00.0: GPU is lost. Reboot the system to recover this GPU" ** In addition, the fan sensors frequently reported "ERR" instead of RPM. I saw that "reboot" message daily when I added the second "extra" board. I tried a 2nd splitter, thinking that keeping similar boards on the same splitter would help. I ended up getting an H110BTC that has 12 x1 slots, and the 4-in-1s are in the scrap pile. The TB-85 had settings for lane speed and I tried a lot of variations where I set the lane speed to spec 2 for the slot that had the 4-in-1, but eventually left it all as "default" as things got even worse. The problem I have now with the TB-85 and H110BTC is projects like Einstein and GPUgrid that use almost a full CPU, while SETI and Milkyway use a small fraction. My gen 6 & 7 CPUs only support 8 threads, so there is a problem on the H110BTC: I cannot feed Einstein fast enough, and 10-minute work units stretch to 30+ minutes with 9 boards. I solve this by limiting the number of concurrent tasks and reporting fewer GPUs to the project than I have. ** I created a program that shuts down the GPUs and reports using a text message here, but I have not had a problem since I quit using those 4-in-1 risers, and I have a mix of 1660, 1060, 1070, p102-100, p104-100, p104-90 and all work fine. 
I had to do this because more often than not, the work units would "time out" and another job was assigned and very quickly I would have 100's of errored out tasks. |
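The 8-threads-versus-9-boards problem described above can be put into numbers with a rough sketch. It assumes each GPU task needs a fixed share of one CPU thread (about 1.0 for Einstein per the post, much less for SETI). The helper names are hypothetical. The generated `app_config.xml` with `project_max_concurrent` is one way the BOINC client lets you cap concurrent tasks; the post does not say which mechanism was actually used.

```python
import xml.etree.ElementTree as ET


def max_fed_gpu_tasks(cpu_threads: int, cpu_per_task: float,
                      reserved_threads: int = 0) -> int:
    """How many GPU tasks the CPU can feed without oversubscribing threads."""
    if cpu_per_task <= 0:
        raise ValueError("cpu_per_task must be positive")
    available = max(0, cpu_threads - reserved_threads)
    return int(available // cpu_per_task)


def plan_concurrency(gpu_count: int, cpu_threads: int, cpu_per_task: float) -> int:
    """Concurrent task limit: bounded by both the GPUs and the CPU threads."""
    return min(gpu_count, max_fed_gpu_tasks(cpu_threads, cpu_per_task))


def render_app_config(project_max_concurrent: int) -> str:
    """Minimal app_config.xml body that caps concurrent tasks for one project."""
    root = ET.Element("app_config")
    ET.SubElement(root, "project_max_concurrent").text = str(project_max_concurrent)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # 9 boards, 8 threads, roughly one full thread per task (assumed).
    limit = plan_concurrency(gpu_count=9, cpu_threads=8, cpu_per_task=1.0)
    print(limit)
    print(render_app_config(limit))
```

With those assumed inputs the limit comes out at 8, one fewer than the number of boards, which matches the idea of running fewer tasks than there are GPUs.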

Joseph Stateson Joined: 27 Jun 08 Posts: 642

|

I finally received the x1 to x16 USB risers. I don't yet have the 4 way version, it's in the post. I ran SETI on P5K, P5E and P7N boards using core-2-quad for years and gave most away. I did get one down from the attic that had a lot of x1 slots and tried risers, but when adding more GPUs the problem was the CPU, not the risers, and more so on Windows. One test I would like to run, but I no longer have socket 775 boards, would be a load test on Einstein to see if the problem is the number of boards: using a core 2 duo, run 2 concurrent tasks on each of 2 boards and compare that to 1 task each on 4 boards. I have been wondering if dedicating a core to a single board with 2 tasks is more efficient than 2 cores allocated to 4 boards. [edit] Forgot to mention in my earlier post: I bought a second set of x1 to x16 risers from the same company as the first set. The new purchase came with a warning that the manufacturer had released a number of risers that had the polarity reversed on the capacitors. He included a picture of an incorrect assembly: the shaded half on top of the capacitor was not on the same side as the colored design on the board where it was soldered. This would mean the + and - were reversed. The seller said to return any defective ones to him for replacement. I went and checked all my risers and all were ok. |

|

Joined: 8 Nov 19 Posts: 718

|

"it depends on the application of course. SETI may not use enough bandwidth to bottleneck even an RTX 2080ti on a PCIe 3.0 x1 link, but another application like Einstein or GPUGrid might need a lot more. So you really have to look at what is trying to be run first." Most, if not all, projects work in a similar way. They use about a fraction of a second out of every few seconds to move data from the CPU to the GPU and back. While I have no 'official documentation', which means absolutely nothing, I do have about 1 year of experience observing the phenomenon in Folding, Boinc, Bitcoin mining, and Games. |

|

Joined: 8 Nov 19 Posts: 718

|

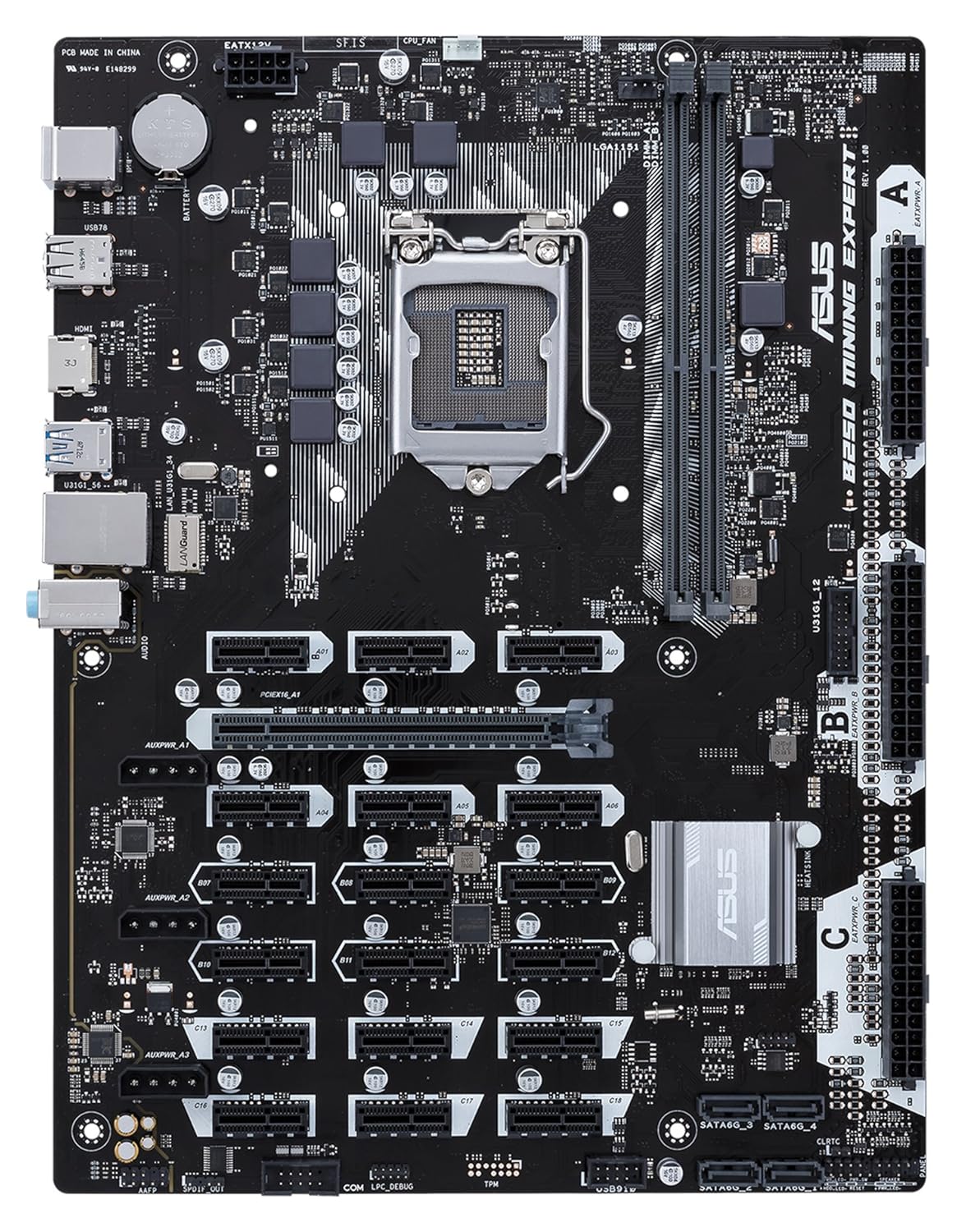

"Just tried both 280x GPUs on USB risers, both onto PCI x1 slots. The BIOS doesn't recognise a card in an x1 slot as a graphics card - no display to the monitor, but once Windows boots it works." - The motherboard you posted specs of states it's a PCIE 2.0 x1 slot, not a PCIE 1.0 slot (meaning 2.0 speeds, x1 sized slot). - You cannot daisy chain PCIE splitters infinitely. Once you use one, one PCIE 1x slot gets divided into 4. Not all motherboards support this. It will 'borrow' 4 PCIE lanes from somewhere else. Once you surpass the maximum of 24 PCIE lanes, your BIOS will just tell you that you have more PCIE lanes than your CPU can assign, and those extra PCIE lanes won't work. Do know that for most Intel CPUs with IGP, 4 PCIE lanes are used up for the IGP, a few more for the USB hub and SSD controller, and possibly more for Wifi. Also, your first PCIE slot will have a minimum and a maximum number of PCIE lanes pre-assigned. It says 16x 16x, but more than likely it'll fall back to 8x 8x. Both slots may do 16x individually, but the CPU doesn't have enough resources to put out 32 PCIE lanes for GPUs only. So discount all PCIE lanes assigned to IGP (your CPU doesn't have IGP, in which case those 4 lanes are unused, and I can't confirm, but believe they can't be reallocated; they're just 'lost', or 'disconnected'), USB, SATA, etc... and most motherboards will be limited to 16, 20, or 24 PCIE lanes. That means, if your first two GPUs can use 16 lanes each (they will be assigned 8 per GPU), you'll have either nothing, 4, or 8 PCIE lanes left to work with. Add a third GPU in a full size slot, and that number might go down by 4. If you're planning on running many GPUs (make sure your wall outlet can provide enough power for them, and your PSU is powerful enough), you might benefit from buying a $70 mining board like this one:  |
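The lane arithmetic in the post above (16, 20 or 24 lanes in total, two GPUs at 8 lanes each) can be sketched as a simple budget. The totals and per-slot widths are the poster's figures, not values read from any particular CPU or chipset, and the function name is made up for this example.

```python
from typing import Sequence


def lanes_left(total_lanes: int, slot_widths: Sequence[int]) -> int:
    """Lanes remaining after each populated slot takes its negotiated width."""
    remaining = total_lanes - sum(slot_widths)
    if remaining < 0:
        raise ValueError(
            f"{sum(slot_widths)} lanes requested, only {total_lanes} available"
        )
    return remaining


if __name__ == "__main__":
    for total in (16, 20, 24):
        # Two GPUs that each fall back from x16 to x8, as described above.
        print(total, "lanes total ->", lanes_left(total, [8, 8]), "left")
```

With a third GPU taking 4 lanes, the 24-lane case drops from 8 spare lanes to 4, and the 16-lane case no longer fits at all.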

|

Joined: 5 Oct 06 Posts: 5149

|

"I do have about 1 year of experience observing the phenomenon in Folding, Boinc, Bitcoin mining, and Games." BOINC doesn't use the GPUs: each separate (GPU using) project uses the GPU in its own way. You can't generalise from one project to another. GPUs have been used by BOINC projects for over 10 years now, and the way they are used has changed over time. |

Joseph Stateson Joined: 27 Jun 08 Posts: 642

|

I suspect you can have an infinite number of "bus id's" but, as suggested by pro digit, if a unique lane must be associated with each "bus id" then there is a limit. On the other hand, if the driver is smart enough, it could use the same lane for all the traffic to the multiplexer (the 4-in-1) but that is a guess as I have no knowledge of the workings of the multiplexer. Looking at this and assuming it is not "fake news" one would think that 104 boards on risers would need 104 lanes. https://videocardz.com/newz/biostar-teasing-motherboard-with-104-usb-risers-support-for-mining |

|

Joined: 24 Dec 19 Posts: 241

|

this is flawed testing methodology unfortunately. you need to run these tasks on an offline benchmark tool to control the test. run the SAME WU job (not every WU will take the same time to process), and change ONE variable (which slot it's plugged into), and then compare the resulting processing time. that will tell you if it's running full speed or not. I see a noticeable speed difference on seti between a PCIe 2.0 x1 link and a PCIe 3.0 x1 link when you hold all other variables static. GPU utilization alone is not a proper way to gauge if the jobs are running at the same rate. |
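The comparison described above (same work unit, one variable changed, compare processing times) might be tabulated like this. It is only a sketch: the timings in the example are invented placeholders, not measurements, and the slot labels and function name are assumptions for illustration.

```python
from statistics import median
from typing import Dict, Sequence


def compare_slots(runtimes_by_slot: Dict[str, Sequence[float]],
                  baseline: str) -> Dict[str, float]:
    """Median runtime of each slot relative to the baseline slot.

    Every list must hold repeated runs of the SAME work unit on the same GPU;
    only the slot (link) differs between lists.
    """
    base = median(runtimes_by_slot[baseline])
    return {slot: median(times) / base for slot, times in runtimes_by_slot.items()}


if __name__ == "__main__":
    # Placeholder numbers in seconds, made up for the example.
    runs = {
        "PCIe 3.0 x1": [100.0, 102.0, 98.0],
        "PCIe 2.0 x1": [110.0, 112.0, 108.0],
    }
    for slot, ratio in compare_slots(runs, baseline="PCIe 3.0 x1").items():
        print(f"{slot}: {ratio:.2f}x baseline runtime")
```

Using the median of several repeats keeps one odd run from skewing the ratio; a ratio above 1.0 means that slot is slower than the baseline.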

|

Joined: 24 Dec 19 Posts: 241

|

where? the specs list PCIe 2.0 x16 and PCIe x1. nowhere do the specs or manual say that his x1 slots run at 2.0 speeds. There's a reason they said the x16 slots are 2.0 and didn't mention it on the x1 slots, because the x1 slots are NOT 2.0. if they were, the marketing department would have made sure to advertise it as such. when PCIe came out, it was just called PCIe, not PCIe 1.0. when they released further generations of the technology they began labeling it as 2.0/3.0/4.0/etc so the consumer can easily know what they are getting. "So discount all PCIE lanes assigned to IGP (your CPU doesn't have IGP, in which case those 4 lanes are unused, and I can't confirm, but believe they can't be reallocated; they're just 'lost', or 'disconnected')" iGPU doesn't take away from the CPU PCIe lanes. the iGPU doesn't use the PCIe bus at all. it's connected right to the CPU. it's always good to look at the block diagram:  |

|

Joined: 24 Dec 19 Posts: 241

|

look at the information I posted in my previous comment. and look at the block diagram for your board that i also posted. you have 2x PCIe 2.0 x16 slots and 2x PCIe 1.0 x1 slots. when the documentation "doesn't list" the generation and only says PCIe, that means it's 1.0. you will never be able to "infinitely" daisy chain those splitters. even if you didn't run into communication issues over the PCIe bus, the motherboard will not be able to map all of the VRAM. such old boards just can't handle it. "lane sharing" does take place with the 4-in-1 splitters via a PLX switch on a single lane. it's constantly cycling through the different inputs, only allowing the data from one card at a time. it does not send all data from all cards over the bus at the same time. when you daisy chain them you will undoubtedly run into communication issues. |
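Taking the description above at face value (the switch serves one card at a time over a single lane), a toy round-robin model shows what that sharing does to transfer times. This is a simplification for illustration only: the slice size, the 250 MB/s link figure (roughly a PCIe 1.0 x1 lane) and the function name are assumptions, and a real switch's arbitration is more involved.

```python
from collections import deque
from typing import Dict


def round_robin_finish_times(pending_mb: Dict[str, float],
                             link_mb_s: float,
                             slice_mb: float) -> Dict[str, float]:
    """Time (s) at which each card's transfer completes on a shared link.

    The link carries data for one card at a time, in slices of slice_mb,
    cycling through the cards that still have data queued.
    """
    if link_mb_s <= 0 or slice_mb <= 0:
        raise ValueError("link_mb_s and slice_mb must be positive")
    queue = deque(pending_mb.items())
    clock = 0.0
    finished: Dict[str, float] = {}
    while queue:
        card, remaining = queue.popleft()
        sent = min(slice_mb, remaining)
        clock += sent / link_mb_s
        remaining -= sent
        if remaining > 0:
            queue.append((card, remaining))  # back of the line for another turn
        else:
            finished[card] = clock
    return finished


if __name__ == "__main__":
    cards = {f"gpu{i}": 100.0 for i in range(4)}
    for card, t in round_robin_finish_times(cards, link_mb_s=250.0, slice_mb=50.0).items():
        print(f"{card}: done at {t:.1f} s")
```

In this model a card that would finish a 100 MB transfer in 0.4 s on its own finishes between 1.0 s and 1.6 s when four cards share the lane, and a second level of splitters would stretch that further.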

Copyright © 2025 University of California.

Permission is granted to copy, distribute and/or modify this document

under the terms of the GNU Free Documentation License,

Version 1.2 or any later version published by the Free Software Foundation.